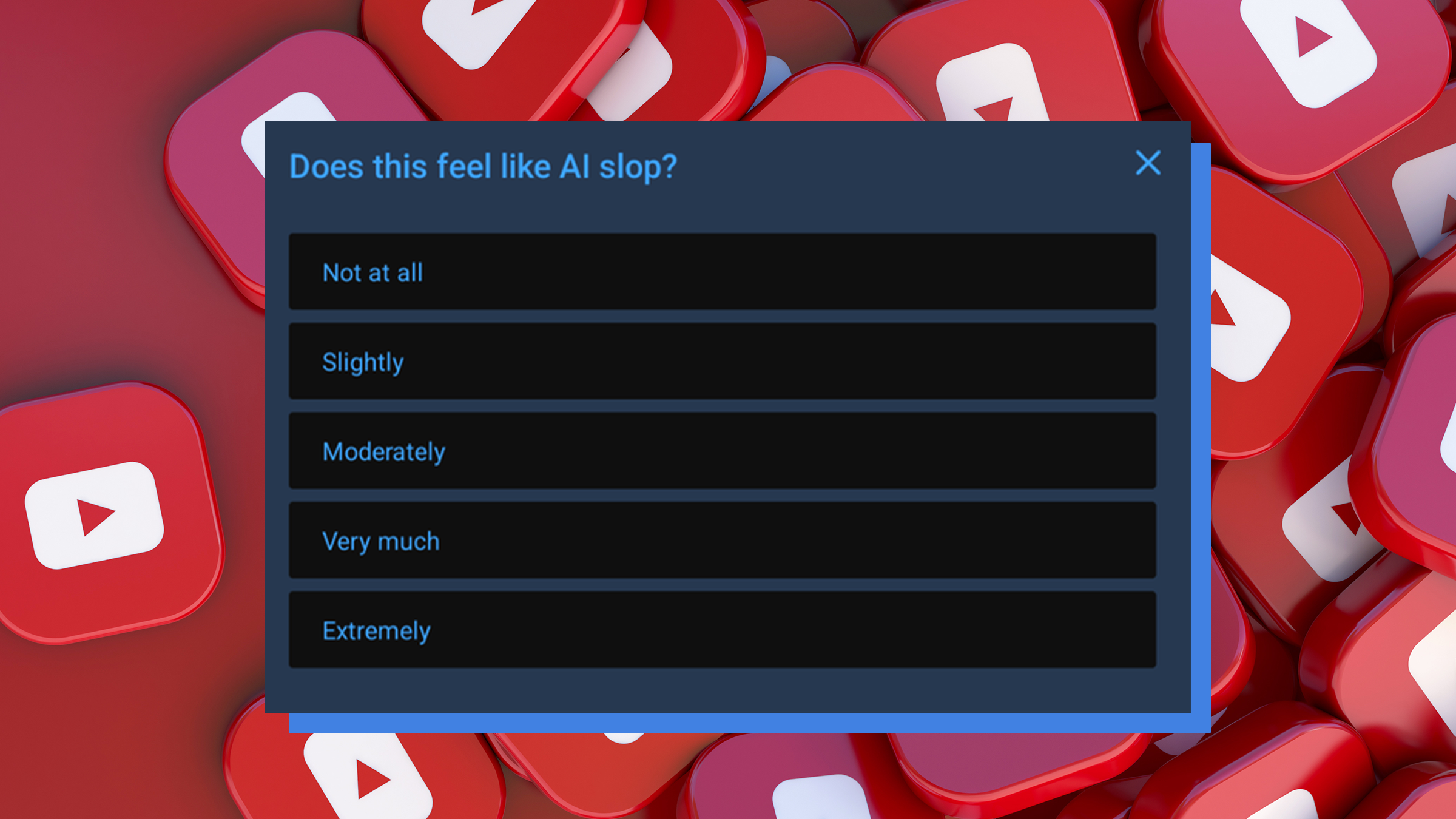

YouTube just launched a new pop-up survey asking viewers if videos look like "AI slop," spurring theories that the company is training its own bot. Since Tuesday, instead of asking whether a video was relevant to a search, YouTube sometimes prompts users to rate where the clip falls on their personal AI detection meter.

The reactions might sound paranoid if you don't know what other companies have done with your data.

Rate your YouTube slop

Starting on March 17, YouTube watchers began seeing a new popup prompt during their videos. "Does this feel like AI slop?" it asks.

Users can label the video's vibes as anywhere between "not at all" and "extremely" sloppy.

One possible reason for this change is the company's declared intention to fight the influx of low-quality content generated with large language models (LLMs). In January, CEO Neal Mohan named this a 2026 priority in his annual letter to YouTube.

"It’s becoming harder to detect what’s real and what’s AI-generated," he said. "This is particularly critical when it comes to deepfakes."

"To reduce the spread of low-quality AI content, we’re actively building on our established systems that have been very successful in combating spam and clickbait, and reducing the spread of low-quality, repetitive content."

At the same time, he talked about the expansion of YouTube's own LLM to allow users to create videos and even simple games with text prompts.

This letter came on the heels of a report finding that over 20 percent of YouTube content was AI slop by the end of 2025.

Is YouTube fighting AI or training it?

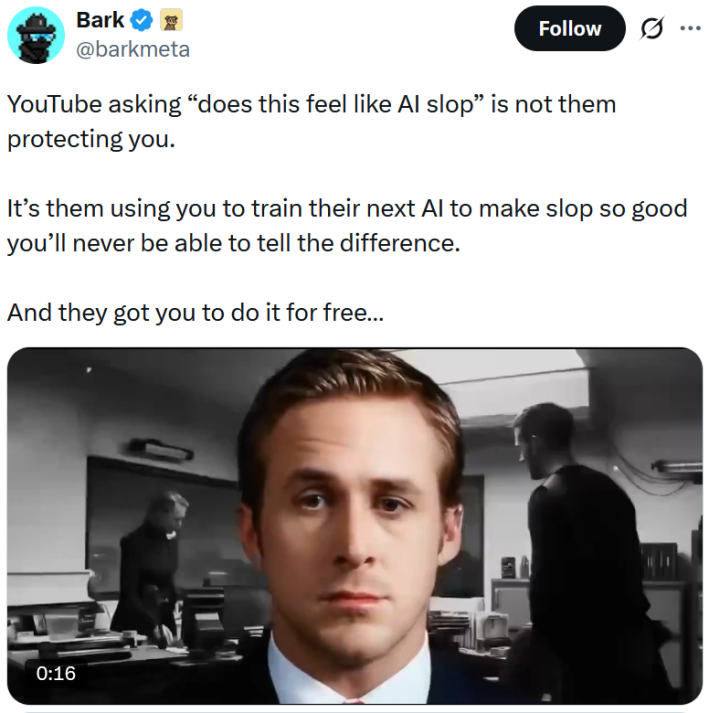

On X, the "everything except CSAM, we promise this time" app, AI-bombarded folks are accusing YouTube of using us to train its AI to make better-quality slop, rather than to train its algorithm to suppress low-quality slop.

"YouTube asking 'does this feel like AI slop' is not them protecting you," wrote @barkmeta. "It’s them using you to train their next AI to make slop so good you’ll never be able to tell the difference."

"And they got you to do it for free."

"YouTube isn't banning AI slop.. They're making you label it so they can train their next model to not look like slop," claimed @TukiFromKL.

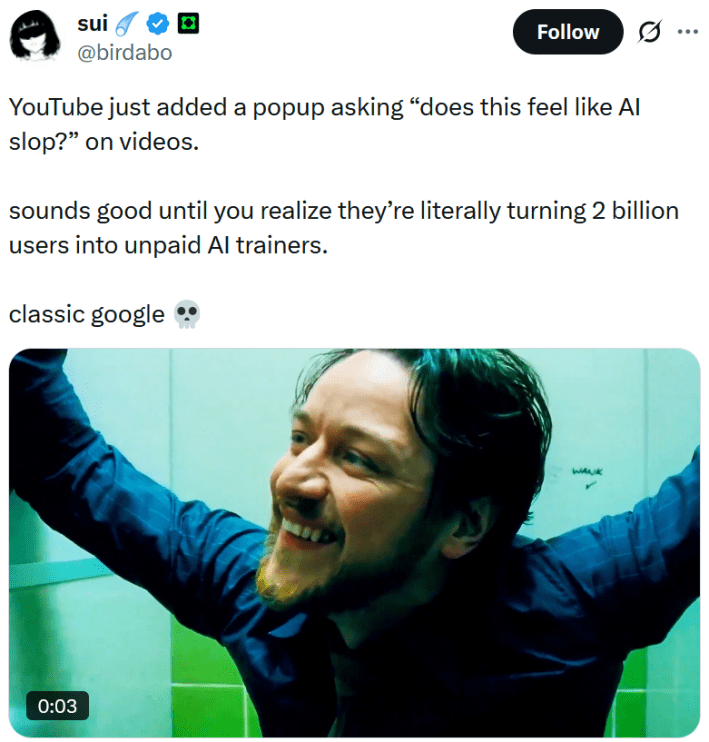

User @birdabo lamented that the new YouTube prompt "sounds good until you realize they’re literally turning 2 billion users into unpaid AI trainers."

A lot of the people fueling this theory are AI fans or even developers themselves. Somehow, they're not fans of taking the labor of others and using it to enrich oneself without credit or compensation.

Regardless, it makes sense that modern internet users would assume that YouTube's slop question is another attempt to make us all unknowingly train an AI. In 2018, we found out that those reCAPTCHA bot detectors were using people's answers and puzzle-solving skills to do just that.

Google, YouTube's owner, also owns reCAPTCHA.

Then, just last weekend, news broke that Niantic used all those cute photos we took on Pokémon Go to train AI delivery bots.

It's no wonder the term "AI paranoia" is starting to become a thing.
